Rule-based security worked fine when your data lived behind a firewall and users worked from the office. Someone tries to access a restricted file? Block it. Login attempt from a blacklisted country? Deny it. Unusual port activity? Flag it.

The rules were static. The environment was predictable. And someone had to write every single rule, maintain it, and update it as your organization changed.

That model breaks down completely in cloud environments. Your team works from anywhere. They access dozens of SaaS applications. Roles shift, responsibilities evolve, and what's normal for one person looks nothing like what's normal for another. Writing rules for every possible scenario isn't just impractical. It's impossible.

This is where behavioral baselining changes the game. Instead of telling a system what's suspicious, you let it learn what normal looks like. Then anything that deviates stands out automatically.

The Problem with Rules

Traditional detection systems operate on if-then logic. If a user downloads more than 100 files in an hour, trigger an alert. If someone logs in from a new country, flag it. If an account accesses a sensitive directory, notify the security team.

Sounds reasonable until you consider the context. Your sales team might legitimately download 200 files before a big presentation. Your finance team accesses sensitive directories every day as part of their job. Rigid rules can't distinguish between legitimate work and actual threats. They treat every deviation as equally suspicious, which means your team drowns in false positives.
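To make the contrast concrete, here is a minimal Python sketch of the kind of fixed rule described above. The threshold and function name are hypothetical and mirror the 100-files-per-hour example. The rule sees only a count, never who produced it or why.

```python
# Hypothetical static rule: one fixed threshold applied to every user alike.
DOWNLOAD_THRESHOLD_PER_HOUR = 100

def static_rule_alert(downloads_last_hour: int) -> bool:
    """Return True if the fixed rule fires. The user's role and history are ignored."""
    return downloads_last_hour > DOWNLOAD_THRESHOLD_PER_HOUR

# A salesperson preparing for a big presentation and an attacker staying
# just under the limit look the same to this rule: only the count matters.
print(static_rule_alert(200))  # True: legitimate sales prep still alerts
print(static_rule_alert(90))   # False: quieter exfiltration slips under
```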

Rule-based systems also require constant maintenance. Your organization isn't static. People change roles, new tools get adopted, workflows evolve. Every change means updating rules, which assumes you have someone with the expertise and time to do it. Most lean IT teams don't. The result? Rules drift out of sync with reality. Alerts become noise. Real threats slip through.

What Behavioral Baselining Actually Means

Behavioral baselining flips the model. Instead of defining what's suspicious, the system learns what's normal for each user, then flags anything that doesn't fit that pattern.

The AI observes activity across your environment. Who accesses which files? When do they typically log in? What applications do they use? Which folders do they browse? How much data do they usually download? Over time, the system builds a profile of normal behavior for each user. Not a static profile, but a dynamic one that adapts as roles and responsibilities change.
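Here is a minimal sketch of the idea, assuming the only signal is a daily download count per user. The code keeps each user's mean and standard deviation and scores new activity against that user's own history. The function names, the sample data, and the 3-standard-deviation threshold are illustrative assumptions. They do not describe how Presence or any particular product computes its baselines.

```python
from statistics import mean, stdev

def build_baseline(history):
    """Map each user to (mean, sample std dev) of their daily download counts.

    Hypothetical helper; stdev needs at least two observations per user.
    """
    return {user: (mean(counts), stdev(counts)) for user, counts in history.items()}

def is_anomalous(baseline, user, value, threshold=3.0, min_std=1.0):
    """Flag `value` if it sits more than `threshold` std devs from this user's mean."""
    mu, sigma = baseline[user]
    # Floor sigma so a user with near-constant activity doesn't alert on trivial changes.
    return abs(value - mu) / max(sigma, min_std) > threshold

# Illustrative history of daily download counts (made-up numbers).
history = {
    "sales_rep": [150, 180, 210, 160, 190],
    "finance_head": [5, 8, 6, 7, 4],
}
baseline = build_baseline(history)
print(is_anomalous(baseline, "sales_rep", 200))     # False: a normal day for sales
print(is_anomalous(baseline, "finance_head", 200))  # True: far outside this user's norm
```

The same 200-file day is about one standard deviation above the sales rep's mean, but it is more than a hundred above the finance lead's. The volume is identical, so the difference comes entirely from each user's own history.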

When something genuinely unusual happens, it stands out immediately. The Head of Finance who never touches engineering documents suddenly downloads the entire product roadmap at 2 AM. That's not a rule violation. It's a behavioral anomaly. The system provides context: what was accessed, when it happened, and how this compares to the user's typical behavior.

What the AI Actually Tracks

Behavioral baselines focus on patterns, not content. The system isn't reading your emails or monitoring keystrokes. It's observing access and activity patterns:

Authentication patterns. When does this user typically log in? From which locations? A login at 3 AM from a new country isn't automatically suspicious. But if that user has never logged in outside business hours and suddenly starts accessing systems overnight from an unfamiliar location, that's worth investigating.
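The authentication check can be sketched the same way. This hypothetical helper assumes login history is available as (hour, country) pairs. It treats a value as unusual when the value appears in fewer than 5% of a user's past logins. The cutoff, the `Login` type, and the country codes are assumptions for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List

@dataclass
class Login:
    hour: int      # hour of day, 0-23, in the user's local time
    country: str   # country the login came from, e.g. "US"

def login_flags(history: List[Login], new: Login, min_share: float = 0.05) -> List[str]:
    """List the ways `new` departs from this user's own login history (hypothetical)."""
    if not history:
        return ["no login history yet"]
    total = len(history)
    hours = Counter(login.hour for login in history)
    countries = Counter(login.country for login in history)
    flags = []
    # A value seen in fewer than `min_share` of past logins counts as unusual.
    if hours[new.hour] / total < min_share:
        flags.append("unusual hour")
    if countries[new.country] / total < min_share:
        flags.append("unfamiliar country")
    return flags

office_worker = [Login(hour, "US") for hour in (9, 10, 11, 14, 16)] * 4
frequent_traveler = [Login(3, "RO"), Login(2, "DE"), Login(23, "US"), Login(3, "DE"), Login(10, "RO")]
late_login = Login(3, "RO")
print(login_flags(office_worker, late_login))      # ['unusual hour', 'unfamiliar country']
print(login_flags(frequent_traveler, late_login))  # []
```

The same 3 AM login raises two flags for the office worker and none for the traveler. This matches the point above: time and place only matter relative to the user's own history.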

File access patterns. Which files and directories does this user normally work with? A finance team member accessing budget spreadsheets every day is expected. That same person suddenly browsing HR files or downloading customer databases is not.

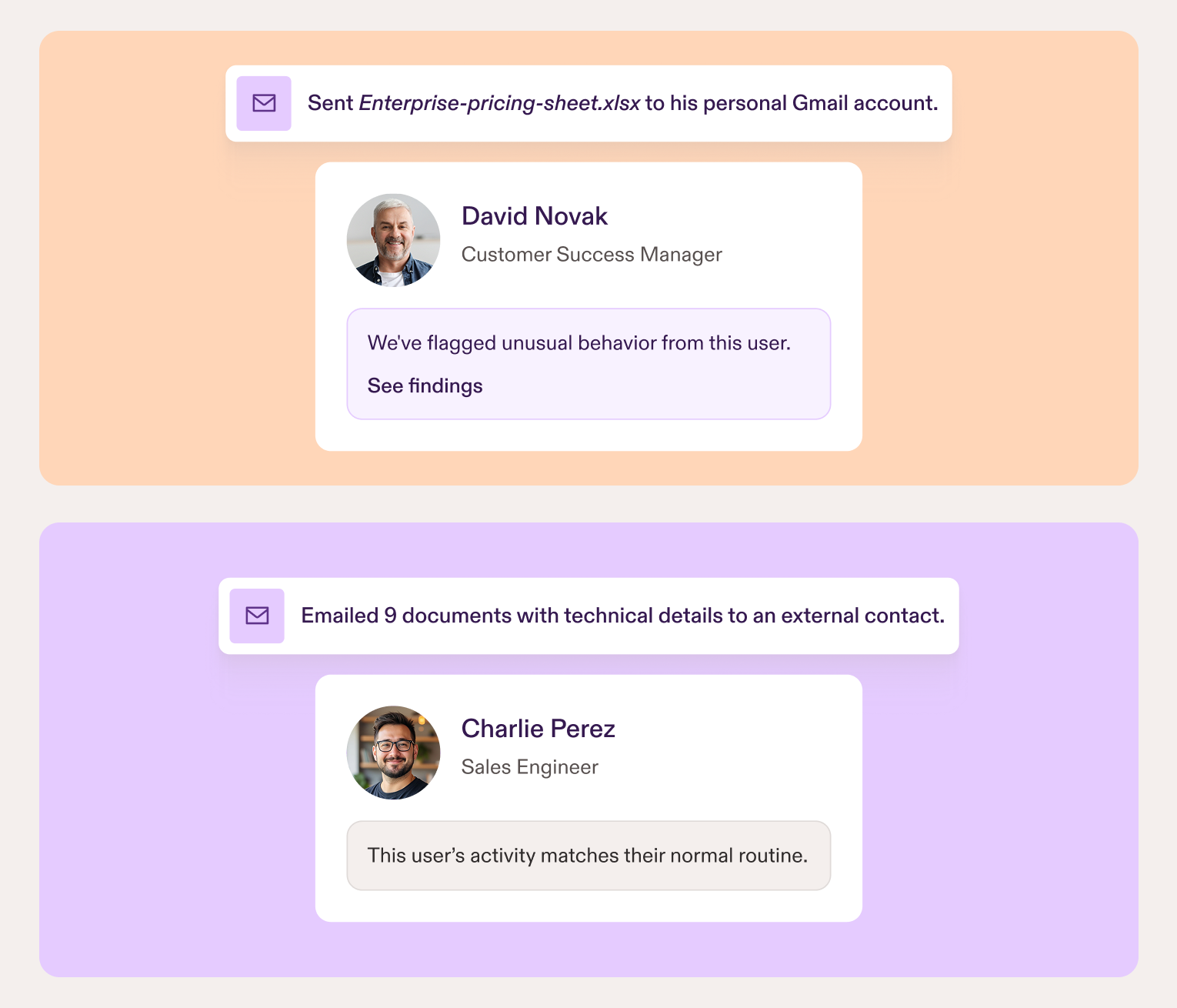

Data movement. How much data does this user typically download or upload? Where does it go? What kind of files are being moved? A developer downloading code repositories is normal. That same person emailing a 50MB file named "Q4_Financial_Results.xlsx" to a personal Gmail account is a red flag. The system recognizes patterns in file names that indicate sensitive content (terms like "salary," "confidential," "client list," or "budget") and factors this into risk assessment.
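A rough sketch of how these data-movement signals might be combined for a single outbound transfer follows. The first four terms in the list come from the text. "financial" is an added assumption so that the "Q4_Financial_Results.xlsx" example matches. The personal-domain list and the per-user size limit are also assumptions, and real products will weigh these signals differently.

```python
import re
from typing import List

# Hypothetical term list: "salary", "confidential", "client list", "budget" come
# from the text; "financial" is an added assumption so the Q4 example matches.
SENSITIVE_TERMS = ("salary", "confidential", "client list", "budget", "financial")
# Assumed set of consumer mail domains treated as personal destinations.
PERSONAL_DOMAINS = {"gmail.com", "outlook.com", "yahoo.com"}

def sensitive_terms(filename: str) -> List[str]:
    """Return sensitive terms found in a file name, ignoring case and separators."""
    # Turn "_", "-" and "." into spaces so "client_list.csv" matches "client list".
    normalized = re.sub(r"[_\-.]+", " ", filename.lower())
    return [term for term in SENSITIVE_TERMS if term in normalized]

def movement_flags(filename: str, size_mb: float, recipient: str, typical_max_mb: float) -> List[str]:
    """List reasons a single outbound transfer looks risky for this user (hypothetical)."""
    flags = []
    if size_mb > typical_max_mb:
        flags.append("larger than this user's usual transfers")
    terms = sensitive_terms(filename)
    if terms:
        flags.append("sensitive name: " + ", ".join(terms))
    if recipient.rsplit("@", 1)[-1].lower() in PERSONAL_DOMAINS:
        flags.append("personal email destination")
    return flags

print(movement_flags("Q4_Financial_Results.xlsx", 50, "someone@gmail.com", typical_max_mb=10))
print(movement_flags("service-repo.tar.gz", 40, "teammate@example.com", typical_max_mb=200))  # []
```

The first call raises all three flags: size, sensitive name, and personal destination. A developer's routine repository download raises none.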

Collaboration patterns. Who does this user typically share files with? Sharing documents with colleagues on a project is routine. Sharing sensitive files with external email addresses that have never appeared in your system before is worth a closer look.
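The collaboration signal reduces to a small check. This is a hypothetical sketch that assumes you already know whether a file is sensitive and which addresses this user has shared with before. The domains use the reserved `.example` suffix as placeholders.

```python
def worth_closer_look(recipient: str, file_is_sensitive: bool,
                      internal_domain: str, seen_recipients: set) -> bool:
    """True when a sensitive file goes to an external address never seen before.

    Hypothetical helper; `seen_recipients` holds lowercase addresses from past shares.
    """
    address = recipient.strip().lower()
    is_external = address.rsplit("@", 1)[-1] != internal_domain.lower()
    is_new = address not in seen_recipients
    return file_is_sensitive and is_external and is_new

seen = {"partner@vendor.example", "alice@corp.example"}
print(worth_closer_look("bob@corp.example", True, "corp.example", seen))        # False: internal colleague
print(worth_closer_look("partner@vendor.example", True, "corp.example", seen))  # False: known external contact
print(worth_closer_look("drop@unknown.example", True, "corp.example", seen))    # True: sensitive, external, first seen
```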

The system continuously learns and adapts. When someone's responsibilities change, it adjusts automatically, but it also distinguishes gradual evolution from sudden, suspicious shifts. That cuts down on false positives. Instead of 100 alerts a day where maybe one is real, you get fewer alerts, and each one warrants investigation.
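One common way to adapt to gradual change while still flagging sudden shifts is to use an exponentially weighted moving mean and variance. The text doesn't say which method Presence uses. The sketch below illustrates that general technique under assumed parameters: `alpha=0.1`, a 3-standard-deviation threshold, and a floor of 2 on the standard deviation.

```python
import math

class AdaptiveBaseline:
    """Hypothetical per-user baseline for one daily metric (e.g. files downloaded).

    An exponentially weighted mean and variance let "normal" drift with gradual
    change while sudden jumps still stand out.
    """

    def __init__(self, alpha: float = 0.1, threshold: float = 3.0, min_std: float = 2.0):
        self.alpha = alpha          # how quickly the baseline adapts (assumed value)
        self.threshold = threshold  # deviations beyond this many std devs are flagged
        self.min_std = min_std      # floor so a very steady user doesn't alert on tiny changes
        self.mean = None
        self.var = 0.0

    def observe(self, x: float) -> bool:
        """Score x against the current baseline; return True if it is anomalous."""
        if self.mean is None:       # the first observation seeds the baseline
            self.mean = x
            return False
        std = max(math.sqrt(self.var), self.min_std)
        anomalous = abs(x - self.mean) / std > self.threshold
        if not anomalous:
            # Incremental exponentially weighted update of mean and variance.
            diff = x - self.mean
            incr = self.alpha * diff
            self.mean += incr
            self.var = (1 - self.alpha) * (self.var + diff * incr)
        return anomalous

baseline = AdaptiveBaseline()
# Gradual change: daily downloads creep from 10 to 39.5 over 60 days.
ramp_alerts = [baseline.observe(10 + 0.5 * day) for day in range(60)]
print(any(ramp_alerts))       # False: the baseline drifts along with the user
print(baseline.observe(500))  # True: a sudden spike stands out
```

Flagged values are deliberately kept out of the baseline, so a single burst can't redefine "normal." The tradeoff is that a genuine but abrupt role change keeps alerting until someone confirms it and the baseline is reset.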

Real Examples: Normal vs. Anomalous

Example 1: The Operations Manager

Normal behavior: Your operations manager logs in daily to review production schedules, access safety documentation, and coordinate with site supervisors. They work standard business hours, access manufacturing execution systems, and share reports with the production team.

Anomalous behavior: That same account logs in at 2 AM on a Sunday, starts accessing engineering drawings and process specifications they've never touched, and begins downloading technical documentation for equipment they don't oversee. The credentials are valid, but the behavior doesn't match any established pattern.

Example 2: The Finance Team Member

Normal behavior: Someone in finance accesses accounting software daily, downloads monthly reports, and shares spreadsheets with the CFO and other finance team members. They work typical business hours and follow predictable patterns.

Anomalous behavior: That same account suddenly starts accessing HR files containing employee salary data, downloads personnel records, and emails attachments to a personal Gmail address. None of these actions match their historical behavior, and they're happening during their notice period before leaving the company.

Example 3: The Maintenance Technician

Normal behavior: A maintenance technician accesses equipment manuals, logs work orders in your computerized maintenance management system (CMMS), and shares maintenance schedules with the facilities team. They typically work day shifts and access systems from the plant office or occasionally from home.

Anomalous behavior: Their account logs in over the weekend, downloads proprietary equipment calibration procedures, accesses supplier contract files they've never needed before, and uploads technical documentation to a personal cloud storage account. The volume, timing, and destination all deviate from established norms.

In each case, the anomalous behavior isn't caught by a rule. It's caught because it doesn't fit the pattern of what this specific user normally does.

How Presence Uses Behavioral AI

Presence connects to your Microsoft Entra ID and immediately begins observing activity across your Microsoft 365 environment. It learns what normal looks like for each user without requiring manual configuration or rule writing. When behavior deviates from established patterns, anomalies surface automatically with full context about what happened and why it's unusual. Canaries add an extra layer by catching compromised accounts the moment they take the bait, while low-risk event tracking highlights smaller security gaps before they escalate.

The system adapts as your organization changes, so you're not stuck maintaining detection logic or tuning thresholds. You get alerts that actually matter, backed by evidence that makes investigation straightforward.

See how Presence detects these scenarios in real time. Email contact@pistachioapp.com to book a 15-minute demo and we'll walk through exactly how it works in your environment.