Insider threats don't always look like malicious actors. Sometimes it's a compromised account moving laterally through your environment. Other times it's an employee forwarding sensitive files before they leave. The challenge isn't just detecting these events. It's detecting them before damage occurs.

Most organizations assume they'd notice unusual activity. But without baseline behavior models or continuous monitoring, these incidents stay hidden until someone reports missing data or a compliance audit surfaces the problem.

Here are three scenarios that happen more often than IT teams realize, and what detection looks like when it works.

Scenario 1: The Departing Employee

A mid-level employee accepts a role at a competitor. Two weeks before their last day, they start accessing files outside their normal scope. They download pricing models from SharePoint, export client lists from your CRM, and email attachments to their personal Gmail account.

By the time HR processes their exit, the data is already gone.

What makes this hard to catch: The account is legitimate. The actions (accessing files, sending emails) are normal workplace behavior. Without understanding what this person typically does, these actions don't stand out.

Scenario 2: The Compromised Account

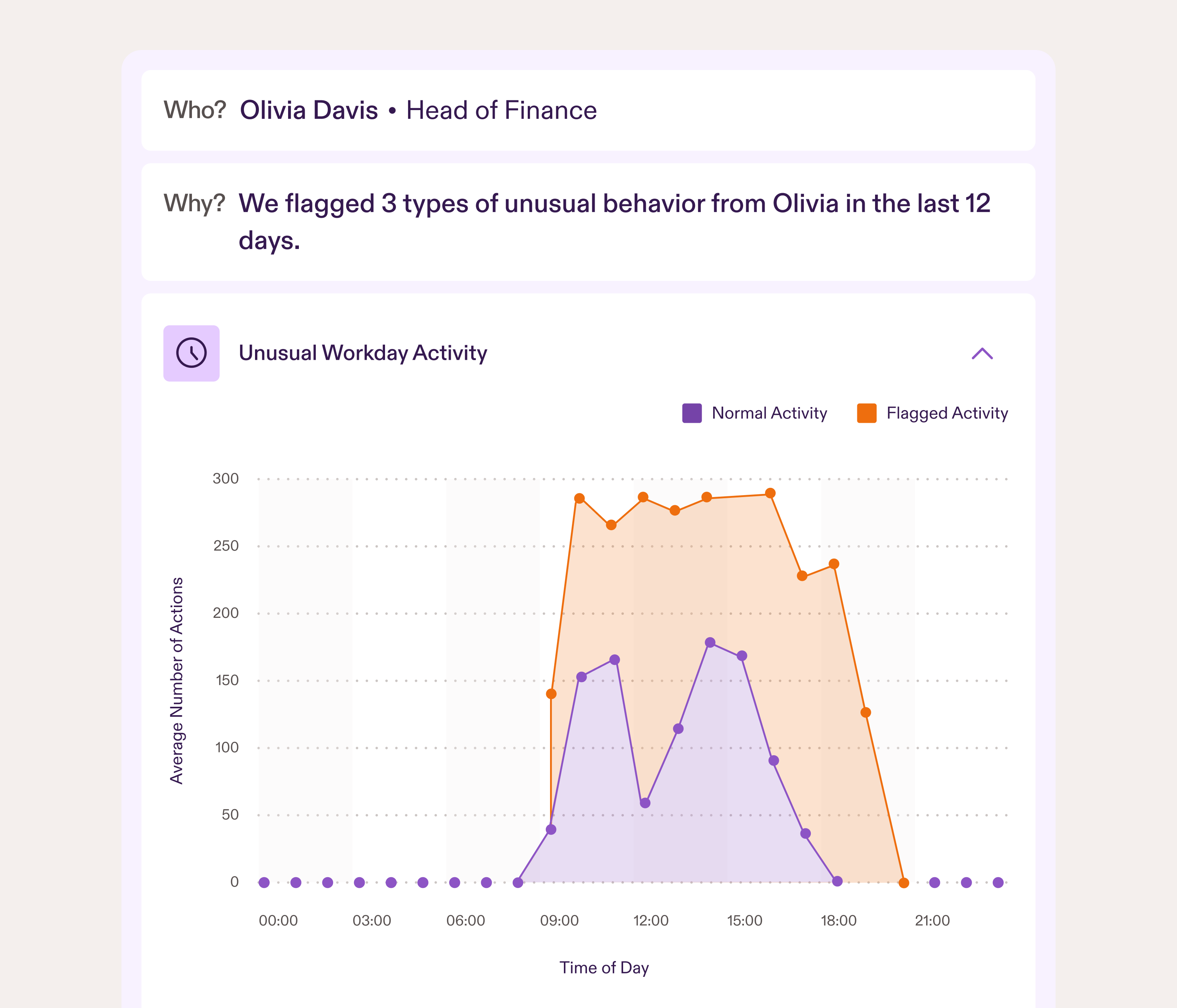

Your Head of Finance clicks a convincing phishing link. The attacker now has valid credentials and begins exploring your environment. They access files they've never touched, attempt logins outside business hours, and enumerate sensitive folders looking for financial data or customer information.

Because the credentials are real, traditional security tools see authorized access.

What makes this hard to catch: The account isn't behaving like the actual user, but without a baseline of normal behavior, how would you know? Compromised accounts often fly under the radar for weeks because the activity looks legitimate on the surface.

Scenario 3: The Accidental Exposure

A well-meaning team member needs to share a large dataset with an external consultant. Instead of using your secure file sharing process, they email it directly because it's faster. The file contains personally identifiable information (PII). The consultant's email account gets breached three months later, and your data ends up exposed in a data dump.

You had no idea the data left your environment.

What makes this hard to catch: People find workarounds. Secure processes slow them down, so they use personal email, public file sharing links, or unauthorized apps. These actions aren't malicious, but they create risk.

Why Detection Fails (And What Actually Works)

The common thread across all three scenarios? Traditional security tools missed them because they rely on rules and signatures. Rules require someone to write them, tune them, and maintain them as your environment changes. That assumes you have a SOC or dedicated security analysts.

Most IT teams don't. They're managing infrastructure, handling tickets, and keeping systems running. Writing detection rules and investigating endless false positives isn't feasible.

This is where behavior-based detection changes the equation. Instead of rules, AI models baseline what normal looks like for each user across your Microsoft environment: who accesses what, when, and from where. When someone suddenly accesses files they've never touched, logs in at 2 AM and browses unfamiliar directories, or emails sensitive data externally, the deviation is obvious to the model even if it's not obvious to your team.

Anomalies surface automatically with full context. You see what happened, when it happened, and why it's unusual for that specific user. No background in threat analysis required.
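
To make the idea concrete, here is a minimal sketch of per-user baselining in Python. It is a toy illustration only, not how Presence or any specific product works: the `Event` fields, the function names, and the simple "never seen before" checks are all assumptions, and a production model would use statistical or learned baselines rather than exact set membership.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    # Hypothetical event shape; real audit logs carry many more fields.
    user: str
    resource: str   # e.g. a file path or site name
    hour: int       # hour of day, 0-23
    location: str   # e.g. a country code


@dataclass
class Baseline:
    resources: set = field(default_factory=set)
    hours: set = field(default_factory=set)
    locations: set = field(default_factory=set)


def build_baselines(history):
    """Record what each user has accessed, when, and from where."""
    baselines = defaultdict(Baseline)
    for e in history:
        b = baselines[e.user]
        b.resources.add(e.resource)
        b.hours.add(e.hour)
        b.locations.add(e.location)
    return baselines


def explain_anomaly(event, baselines):
    """Return the reasons this event is unusual for its user (empty if none)."""
    b = baselines.get(event.user)
    if b is None:
        return ["no baseline for this user"]
    reasons = []
    if event.resource not in b.resources:
        reasons.append(f"never accessed {event.resource} before")
    if event.hour not in b.hours:
        reasons.append(f"no prior activity at {event.hour:02d}:00")
    if event.location not in b.locations:
        reasons.append(f"new location {event.location}")
    return reasons


history = [
    Event("dana", "finance/q3-forecast.xlsx", 10, "US"),
    Event("dana", "finance/q3-forecast.xlsx", 14, "US"),
    Event("dana", "finance/budget.xlsx", 9, "US"),
]
baselines = build_baselines(history)
print(explain_anomaly(Event("dana", "hr/payroll.csv", 2, "US"), baselines))
# ['never accessed hr/payroll.csv before', 'no prior activity at 02:00']
```

The point of returning reasons rather than a bare score is the "full context" described above: the alert says what is unusual for this specific user, not just that something tripped a threshold.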

How Presence Handles These Scenarios

Presence tackles insider threats across multiple layers:

Behavioral AI learns your environment and flags anomalies in real time. When a departing employee starts accessing files outside their normal pattern or downloading bulk data, it surfaces as unusual activity with full context about what changed and why it matters.

Canaries protect admin accounts by planting realistic-looking trap emails in Outlook. If an attacker using a compromised account clicks a canary link while searching for credentials or access points, you're alerted immediately that the account may be in the wrong hands.

Low-Risk Event Tracking surfaces everyday actions that create small security gaps, like storing passwords in shared documents or using work emails for personal services. These aren't threats yet, but they're the kind of exposure attackers look for. A seven-day rolling view keeps the focus on recent behavior, making it easy to address issues before they escalate.
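
As a rough illustration of what a seven-day rolling view involves (this is a generic sketch, not a description of how Presence implements it), the snippet below counts low-risk events per user and kind within a trailing window. The event tuples, the kind labels, and the `rolling_view` function are hypothetical.

```python
from collections import Counter
from datetime import datetime, timedelta


def rolling_view(events, now, days=7):
    """Count events per (user, kind) that fall within the last `days` days.

    `events` is an iterable of (user, kind, timestamp) tuples; this shape
    is an assumption made for the example.
    """
    cutoff = now - timedelta(days=days)
    return Counter(
        (user, kind) for user, kind, ts in events if cutoff < ts <= now
    )


now = datetime(2024, 5, 10, 12, 0)
events = [
    ("dana", "password_in_shared_doc", datetime(2024, 5, 9, 9, 0)),
    ("dana", "password_in_shared_doc", datetime(2024, 5, 4, 9, 0)),
    ("dana", "work_email_personal_signup", datetime(2024, 4, 30, 9, 0)),
]
for (user, kind), count in rolling_view(events, now).items():
    print(user, kind, count)
```

Events older than the window simply drop out of the count, which is what keeps the focus on recent behavior.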

All of this connects to your Entra ID in under 10 minutes. No agents, no complex configuration, no endless tuning. Presence starts learning immediately and only alerts you when behavior is credibly suspicious. You get visibility into insider risk without adding headcount or complexity.

Don't Wait for an Incident

Most organizations discover insider threats after the damage is done. A departing employee's access should have been monitored. A compromised account should have been caught earlier. Accidental exposure should have triggered an alert. The gap isn't awareness. It's tooling that works for lean teams.

See how Presence detects these scenarios in real time. Book a 15-minute demo and we'll walk through exactly how it works in your environment.